Based on Torch, PyTorch has become a powerful machine learning framework favored by researchers around the world and now fully adopted by Facebook. The newest stable release of PyTorch, version 1.11.0, has a number of new highlights, including TorchData, functorch, and Distributed Data Parallel (DDP) static graph optimizations. The release is composed of over 3,300 commits since 1.10, made by 434 contributors. Along with 1.11, the PyTorch team released beta versions of TorchData and functorch.

- TorchData is a new library of common modular data loading primitives for easily constructing flexible and performant data pipelines.
- functorch, a library that adds composable function transforms to PyTorch, is now available in beta.
- Distributed Data Parallel (DDP) static graph optimizations are available in stable.

The release blog post shows the new features in more detail.

Backwards incompatible changes

Python API

Fixed Python deepcopy to correctly copy all attributes on Tensor objects (#65584). This change ensures that the deepcopy operation on a Tensor properly copies all the attributes (and not just the plain Tensor properties).

Calling linspace without a value for steps works, but raises a deprecation warning, reported from aten/src/ATen/native/RangeFactories.cpp:19: "UserWarning: Not providing a value for linspace's steps is deprecated and will throw a runtime error in a future release. This warning will appear only once per process."

Remove _module function that was mistakenly public (#67990). This function is not intended for public use. If you have existing code that relies on it, you can find an equivalent function at torch.hub._import_module.

When you #include a header from the C++ frontend, you can no longer assume that every aten operator is transitively included.
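The two Python-side items above (the deepcopy fix and the linspace deprecation warning) can be illustrated with a small sketch. It assumes PyTorch 1.11 or later is installed; the attribute name `note` is hypothetical and chosen only for illustration.

```python
import copy

import torch

# Attach an extra Python attribute to a tensor.
# "note" is a made-up attribute name used only for this sketch.
t = torch.ones(3)
t.note = "calibrated"

# With the deepcopy fix (#65584), the copy carries the attribute too,
# not just the tensor data.
t_copy = copy.deepcopy(t)
print(t_copy.note)
print(torch.equal(t, t_copy))

# Passing steps explicitly avoids the linspace deprecation warning
# quoted in the release notes.
pts = torch.linspace(0.0, 1.0, steps=5)
print(pts)
```

The copy is independent of the original: modifying `t_copy` in place leaves `t` unchanged.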

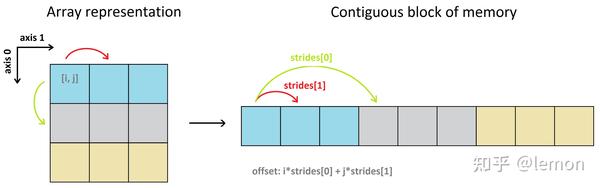

PyTorch is a widely used, open source deep learning platform for easily writing neural network layers in Python, enabling a seamless workflow from research to production.

Transposing/permuting and view/reshape are NOT the same! Reshape and view only affect the shape of a tensor, but do not change the underlying order of elements. In contrast, transpose and permute change the underlying order of elements in the tensor.

Here's an example with B=1, N=3 and C=2 (a 3x3 grid, so 9 positions), where the first channel has the even numbers 0 to 16 and the second channel has the odd numbers 1 to 17: `A = torch.arange(2*9).view(1,9,2)`. If you correctly transpose and then reshape, you get the correct split into even and odd channels: `A.transpose(1,2).view(1,2,3,3)`. However, if you only change the shape (i.e., using view or reshape), you incorrectly "mix" the values from the two channels: `A.view(1,2,3,3)`.

Take a look at this simple example with strides. Starting from the original tensor, `X1.stride()` returns `(1, 4)`: the efficient stride representation can handle this. In the next step, strides cannot cut it anymore and we get an error: RuntimeError: view size is not compatible with input tensor's size and stride (at least one dimension spans across two contiguous subspaces).

So in the end, yes, you end up with two different results: C shares the same data as A, while B is a copy and has a different memory layout.
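A runnable sketch of the two examples above follows. The tensor `A` and the calls on it are taken from the text. The definitions of `X`, `X1` and `X2` are assumptions, chosen so that `X1.stride()` comes out as `(1, 4)` and a later `view` fails, since the original definitions are not shown.

```python
import torch

# Batch of 1, 9 positions (3x3), 2 channels:
# channel 0 holds the even numbers, channel 1 the odd numbers.
A = torch.arange(2 * 9).view(1, 9, 2)

# Transpose first, then view: each channel stays intact.
split = A.transpose(1, 2).view(1, 2, 3, 3)
print(split[0, 0])  # even numbers only
print(split[0, 1])  # odd numbers only

# View alone reads elements in storage order, so the channels get mixed.
mixed = A.view(1, 2, 3, 3)
print(mixed[0, 0])

# Stride example. The shapes here are assumed for illustration.
X = torch.arange(12).view(3, 4)  # original tensor
X1 = X.t()                       # transposed: same storage, swapped strides
print(X1.stride())               # (1, 4)

# Flattening the transposed tensor cannot be expressed with strides alone.
try:
    X1.view(-1)
    view_error = None
except RuntimeError as err:
    view_error = str(err)
print(view_error)

# reshape falls back to copying when a view is impossible.
X2 = X1.reshape(-1)
print(X2)
```

Using `reshape` (or `contiguous()` followed by `view`) is the usual way around the error, at the cost of a copy.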

The difference between B and C is that you have used transpose, which means you have swapped two axes and thereby changed the layout of the memory. The view at the end is just a nice interface for you to access your data, but it has no effect on the underlying data of your tensor. What it comes down to is a contiguous memory data buffer.

Take a smaller example, something we can grasp more easily: `A = torch.rand(1, 4, 3)`. Here, swapping axis=1 and axis=2 comes down to a batched transpose (in mathematical terms): `B = A.transpose(2, 1)`. In terms of memory layout, A has the memory arrangement shown by `A.flatten()`, and B has the one shown by `B.flatten()`. By layout I mean memory arrangement; I am not referring to its shape, which is irrelevant.

Building a view on top of a tensor doesn't change its memory layout; it's an abstraction level to better manipulate tensors.
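The comparison above can be sketched as follows. This version swaps `torch.rand` for `torch.arange` so the element order is easy to read, and it assumes `C` is a plain view of `A` with the target shape, since the original definition of `C` is not shown. Note that `transpose` on its own returns a strided view over the same storage. The different element order becomes visible once the elements are read out with `flatten()`.

```python
import torch

# Deterministic stand-in for torch.rand(1, 4, 3).
A = torch.arange(12.0).view(1, 4, 3)

B = A.transpose(2, 1)  # swaps the last two axes -> shape (1, 3, 4)
C = A.view(1, 3, 4)    # assumed definition: same shape as B, but only a view

# A and C traverse the buffer in the same order.
print(A.flatten())
print(C.flatten())

# B traverses it in a different order.
print(B.flatten())

# The strides show how each tensor walks over the same buffer.
print(A.stride(), B.stride(), C.stride())
print(B.data_ptr() == A.data_ptr(), B.is_contiguous())
```

`B` and `C` have the same shape but hold different values at the same index, which is why the two results differ.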
